Currently Funded Projects

Human-Centred Robotics & Assistive Technology

STRANDS: Spatio-Temporal Representations and Activities for Cognitive Control in Long-Term Scenarios (EU FP7 IP, 600623)

STRANDS will produce intelligent mobile robots that are able to run for months in dynamic human environments. We will provide robots with the longevity and behavioural robustness necessary to make them truly useful assistants in a wide range of domains. Such long-lived robots will be able to learn from a wider range of experiences than has previously been possible, creating a whole new generation of autonomous systems able to extract and exploit the structure in their worlds.

L-CAS scientists in STRANDS are part of a European team of researchers and companies and contribute their unique expertise in long-term mapping and behaviour generation in integrated robotic systems. Our robot developed in STRANDS is called “Linda”.

Contact: Tom Duckett & Marc Hanheide

ENRICHME: Enabling Robot and assisted living environment for Independent Care and Health Monitoring of the Elderly (EU Horizon 2020 RIA, 643691)

ENRICHME is a collaborative project involving academic institutes, industrial partners and charity organizations across six European countries. It tackles the progressive decline of cognitive capacity in the ageing population by proposing an integrated platform for Ambient Assisted Living (AAL) with a mobile robot for long-term human monitoring and interaction, which helps the elderly to remain independent and active for longer. The system will build on and contribute to recent advances in mobile robotics and AAL, exploiting new non-invasive techniques for physiological and activity monitoring, as well as adaptive human-robot interaction, to provide services in support of mental fitness and social inclusion. Our research contribution in this project focuses on robot perception and ambient intelligence for human tracking and identity verification, as well as physiological and long-term activity monitoring of the elderly at home. Primary tasks include developing novel algorithms and approaches for the acquisition, maintenance and refinement of models that describe human motion behaviours over extended periods, as well as integrating these algorithms with the AAL system.

Contact: Nicola Bellotto (PI) & Shigang Yue & Oscar Martinez Mozos

FLOBOT: Floor Washing Robot for Professional Users (EU Horizon 2020 IA, 645376)

FLOBOT is a collaborative project involving academic institutes and industrial partners across five European countries. The project will develop a floor washing robot for industrial, commercial, civil and service premises, such as supermarkets and airports. Floor washing tasks have many demanding aspects, including autonomy of operation, navigation and path optimization, safety with regard to humans and goods, interaction with human personnel, and easy set-up and reprogramming. FLOBOT addresses these problems by integrating existing and new solutions to produce a professional floor washing robot for large areas. Our research contribution in this project focuses on robot perception, based on laser range-finder and RGB-D sensors, for human detection, tracking and motion analysis in dynamic environments. Primary tasks include developing novel algorithms and approaches for the acquisition, maintenance and refinement of multiple human motion trajectories for collision avoidance and path optimization, as well as integrating these algorithms with the robot navigation and on-board floor inspection system.

Contact: Nicola Bellotto (PI) & Tom Duckett

FInCoR: Facilitating Individualised Collaboration with Robots (RIF funding, 2014)

The FInCoR project will investigate novel ways to facilitate individualised Human-Robot Collaboration through long-term adaptation at the level of joint tasks. This will enable robots to work with humans more effectively in scenarios such as high-value manufacturing and assistive care. Imagine a robot helping to assemble a car’s dashboard, knowing that it is working with a left-handed person; or a robot assisting an elderly employee in a car factory who is skilled in fitting a speedometer but requires a third hand to hold the heavy mounting frame in place. Despite significant progress in human-robot collaboration, today’s robotic systems still lack the ability to adjust to an individual’s needs. FInCoR will overcome this limitation by developing online, in-situ adaptation, putting the “human in the loop”. It will bring together flexible task representations (e.g. Markov Decision Processes), machine learning (e.g. reinforcement learning), advanced robot perception (e.g. body tracking) and robot control (e.g. reactive planning) to move from pre-scripted tasks to individualised models. These models account for the preferences, abilities and limitations of each individual human through long-term adaptation. Hence, FInCoR will enable processes known from human-human collaboration, such as two colleagues working together and learning more about each other’s strengths, preferences and strategies, to take place in human-robot teams.

Contact: Marc Hanheide & Peter Lightbody

Mobile Robotics for Ambient Assisted Living

Research Investment Fund (RIF), University of Lincoln

The life span of ordinary people is increasing steadily, and many developed countries, including the UK, are facing the major challenge of an ageing population at greater risk of impairments and cognitive disorders, which reduce their quality of life. Early detection and monitoring of human activities of daily living (ADLs) is important in order to identify potential health problems and apply corrective strategies as soon as possible. In this context, the main aim of this research is to monitor human activities in an ambient assisted living (AAL) environment, using a mobile robot for 3D perception, high-level reasoning and representation of such activities. The robot will enable constant but discreet monitoring of people in need of home care, complementing other fixed monitoring systems and proactively intervening in case of emergency. This goal will be achieved by developing novel qualitative models of ADLs, including new techniques for 3D sensing of human motion and RFID-based object recognition. The research will be further extended by new solutions for long-term human monitoring and anomaly detection.

Contact: Nicola Bellotto & Oscar Martinez Mozos

Active Vision with Human-in-the-Loop for the Visually Impaired

Google Faculty Research Award, Winter 2015

The research proposed in this project is driven by the need for independent mobility for the visually impaired. It addresses the fundamental problem of active vision with human-in-the-loop, which allows for an improved navigation experience, including real-time categorization of indoor environments with a handheld RGB-D camera. This is particularly challenging due to the unpredictability of human motion and sensor uncertainty. While visual-inertial systems can be used to estimate the position of a handheld camera, the camera must often also be pointed towards observable objects and features to support particular navigation tasks, e.g. place categorization. An attention mechanism for purposeful perception, which guides the user's actions towards surrounding points of interest, is therefore needed. This project proposes a novel active vision system with human-in-the-loop that anticipates, guides and adapts to the actions of a moving user, implemented and validated on a mobile device to aid the indoor navigation of the visually impaired.

Contact: Nicola Bellotto (PI), Oscar Martinez Mozos & Grzegorz Cielniak

Agri-Food Technology

Trainable Vision‐based Anomaly Detection and Diagnosis (TADD)

Technology Strategy Board funded Technology Inspired CR&D – ICT Project

Growing market demand for automation in food processing and packaging is driving a need for greater automation of industrial quality control (QC) procedures. This project is developing a multi‐purpose intelligent software technology using computer vision and machine learning for the automatic detection and diagnosis of faulty products, including raw, processed and packaged food products. The developed vision systems are user-trainable, requiring minimal set‐up to work with a wide variety of products and processes. The technology will be refined and evaluated by testing in automated QC equipment in the food industry.

Contact: Tom Duckett

Autonomous Control of Agricultural Sprayers

Technology Strategy Board funded CR&D Feasibility Study

This project aims to improve the efficiency of agricultural spraying vehicles by developing a robust control system for the height of the spraying booms, using laser sensing to model the 3D structure of the crop canopy and terrain ahead of the vehicle. This new technology will enable greater autonomy in agricultural sprayers, enhance and simplify interaction between the driver and the vehicle, and result in an optimised spraying process.

Contact: Grzegorz Cielniak, Tom Duckett

Vision-based Identification of Beetle Damage in Field Beans

Technology Strategy Board funded CR&D Feasibility Study

The project aims to analyse field beans intended for human consumption for larval damage caused by Bruchus rufimanus (bean seed beetle) and for the presence of adult beetles. This will be achieved by employing state-of-the-art computer vision and machine learning techniques. The study will also consider the potential to develop hand-held instruments using the proposed technology for rapid analysis of damage and insect presence.

Contact: Georgios Tzimiropoulos, Grzegorz Cielniak, Tom Duckett

Bio-Inspired Embedded Systems

EYE2E: Building visual brains for fast human machine interaction

In the real world, many animals possess almost perfect sensory systems for fast and efficient interaction within dynamic environments. Vision, as an evolved sense, plays a significant role in the survival of many animal species. The mechanisms in biological visual pathways provide useful models for developing artificial vision systems. The four partners of this consortium will work together to explore biological visual systems at both lower and higher levels through modelling, simulation, integration and realization in chips, and to investigate fast image processing methodologies for human-machine interaction through VLSI chip design and robotic experiments.

Contact: Shigang Yue

LIVCODE: Life like information processing for robust collision detection

Animals are especially good at collision avoidance, even in a dense swarm. In the future, every kind of man-made moving machine, such as ground vehicles, robots, UAVs, aeroplanes, boats and even moving toys, could have the same ability to avoid colliding with other things if a robust collision detection sensor were available. The six partners of this EU FP7 project, from the UK, Germany, Japan and China, will investigate insect visual pathways further and take inspiration from animal vision systems to explore robust embedded solutions for vision-based collision detection in future intelligent machines.

Contact: Shigang Yue

HAZCEPT: Towards zero road accidents – nature inspired hazard perception

The number of road traffic accident fatalities worldwide has recently reached 1.3 million each year, with between 20 and 50 million injuries caused by road accidents. In theory, all accidents can be avoided. Studies have shown that more than 90% of road accidents are caused by or related to human error. Developing an efficient system that can robustly detect hazardous situations is key to reducing road accidents. The HAZCEPT consortium will focus on automatic hazard scene recognition for safe driving. HAZCEPT will address hazard recognition from three aspects of the safe driving loop: the lower visual level, the cognitive level, and driver factors.

Contact: Shigang Yue

Other Research Areas

Analysis and Generation of human-robot joint spatial behaviour

When humans and robots share space, there is more to the interaction between them than verbal communication. For example, spatial prompts can act as non-verbal communicative cues: a motion of the entire machine or body (translational or rotational) can be a prompt and is therefore a means of communication.

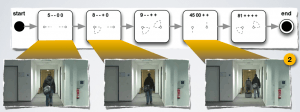

In scenarios where a future robot helps in shops, homes, and offices, it needs to detect and react to non-verbal human cues. Building on situational proxemics and spatial concepts, the goal is to use these cues to make human-robot interaction smooth, not only with users but also with bystanders.

Envision a narrow passage (e.g. a doorway, hallway, or small room) in which two people have to manage the space around them, e.g. because they want to walk in opposite directions. In such situations, humans usually seem well able to understand each other's intentions and to negotiate non-verbally how both can achieve their goals, e.g. by making room for each other to pass through the narrow space.

Contact: Nicola Bellotto & Marc Hanheide